Imagen

Note: I’ve recently become aware that Google actually has an image model called Imagen. This post has nothing to do with it. I was unaware of that model when I started working on this project, and the naming is purely coincidental.

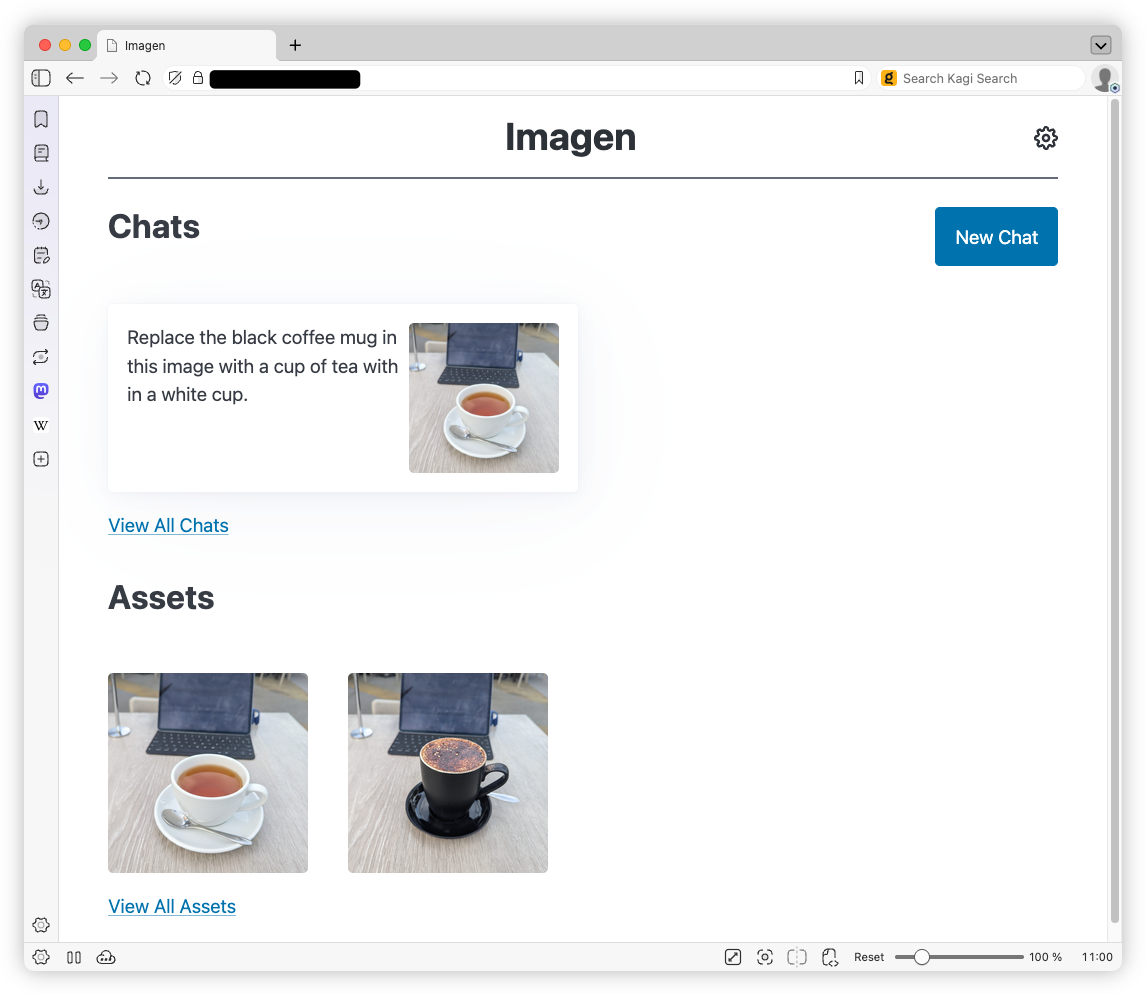

I won’t bore you with any justification for why I actually built this thing. It’s really nothing more than wanting a harness to play with Google’s Nano Banana. Up until now, I’ve been using Blogging Tools for that, but it lacked any way to request changes to images in a chat-like interface (so-called “multi-step flows”) where the context is preserved. So I made Imagen as a replacement.

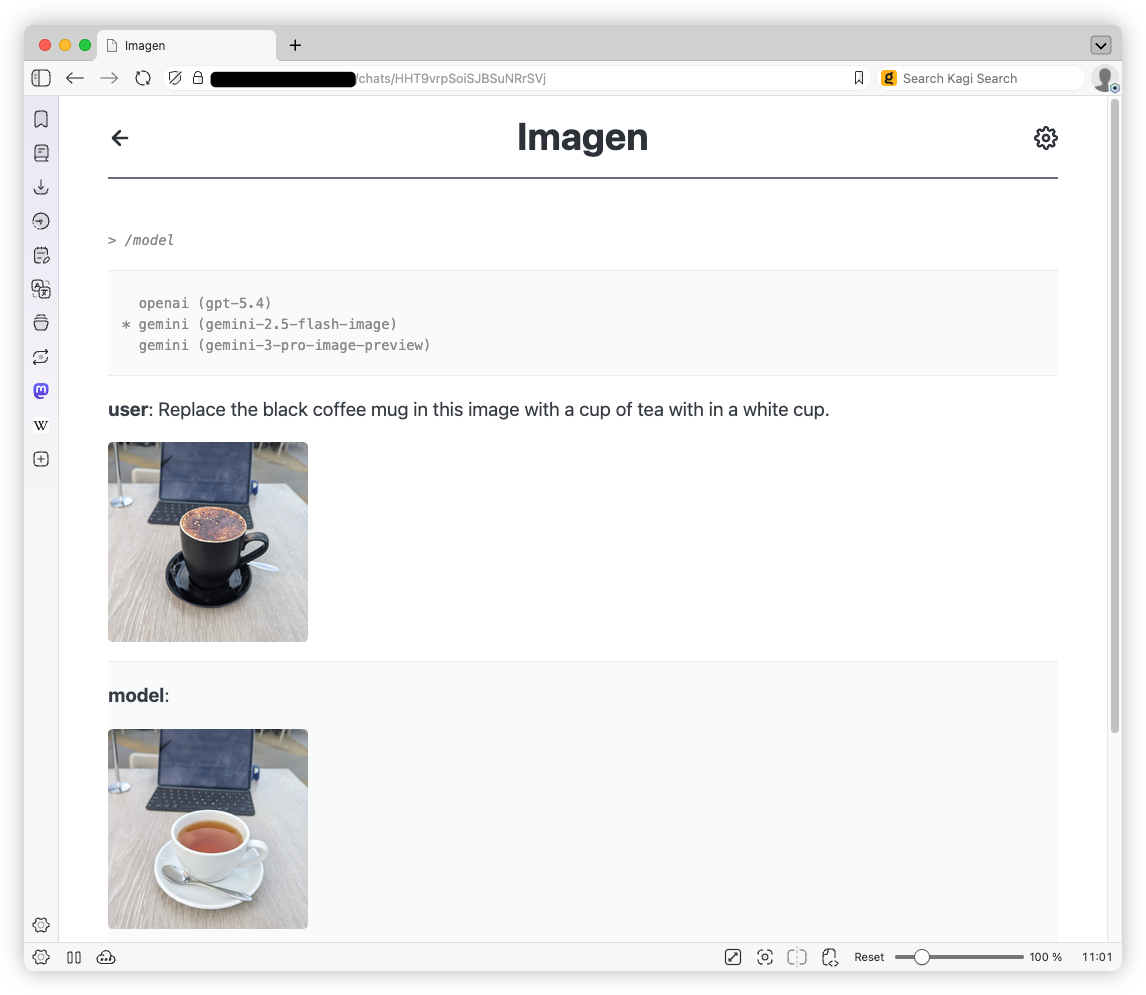

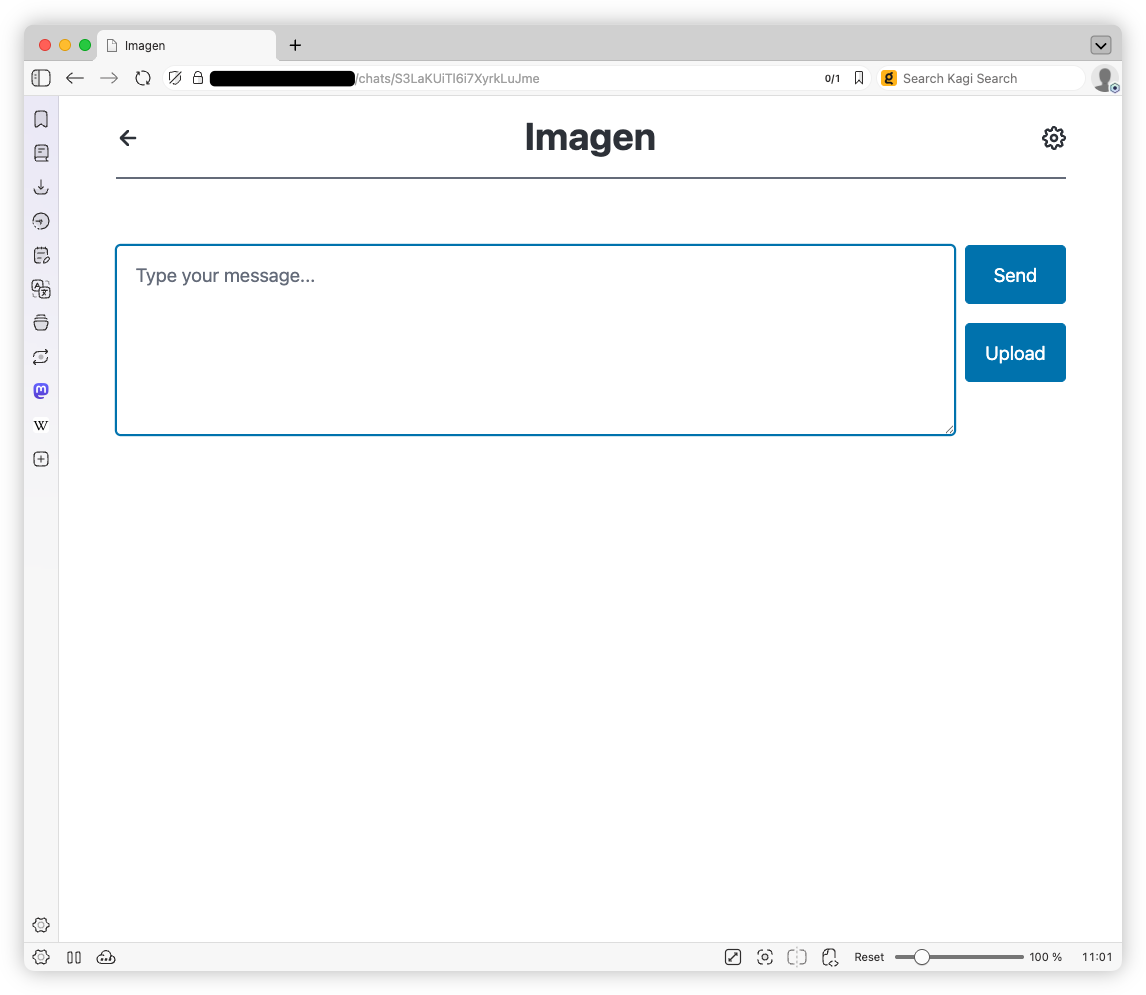

This is a pretty typical chat-based AI model harness. You start a chat requesting an image, maybe uploading one you want modified, click “Send”, and wait for the model to respond. There are also a bunch of meta commands, entered using the / prefix, that allow you to change the model, or to retry or undo the last request.
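To make the meta command idea a little more concrete, here is a minimal sketch of how input with a / prefix could be told apart from a regular prompt. The `Command` type, the `parseInput` function, and the command names in the usage example are all hypothetical; they are not taken from Imagen itself.

```go
package main

import (
	"fmt"
	"strings"
)

// Command is a parsed meta command. Hypothetical type for illustration.
type Command struct {
	Name string   // command name without the leading slash, lower-cased
	Args []string // whitespace-separated arguments, if any
}

// parseInput reports whether the input is a meta command (starts with "/")
// and, if so, returns it split into a name and arguments.
// Anything else is treated as a regular prompt for the model.
func parseInput(input string) (Command, bool) {
	trimmed := strings.TrimSpace(input)
	if !strings.HasPrefix(trimmed, "/") {
		return Command{}, false
	}
	fields := strings.Fields(strings.TrimPrefix(trimmed, "/"))
	if len(fields) == 0 {
		// A lone "/" carries no command name.
		return Command{}, false
	}
	return Command{Name: strings.ToLower(fields[0]), Args: fields[1:]}, true
}

func main() {
	// The command names below are assumptions, not Imagen's real commands.
	for _, in := range []string{"/model some-model", "/undo", "a cat wearing a hat"} {
		cmd, ok := parseInput(in)
		fmt.Printf("%q -> command=%v name=%q args=%v\n", in, ok, cmd.Name, cmd.Args)
	}
}
```

A handler could then switch on `cmd.Name` before deciding whether to forward the text to the model at all.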

Chats and assets are stored as regular files (assets as regular image files, chats as a Gob-serialised history of the chat) and metadata is managed by a SQLite database. In terms of the tech stack, I’ve settled on a mix that works well for me: a Go backend, using Fiber as the web framework, and Go templates for server-side rendering. I eschewed Stimulus.js for this one though, deciding to try out HTMX. And it’s a unique way of working. I learnt that to get the most out of HTMX, you really need to find ways not to use JavaScript to do something.
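For anyone unfamiliar with Gob, here is a rough sketch of what serialising a chat history with Go’s `encoding/gob` package can look like. The `Message` struct and its fields are assumptions made for illustration, not Imagen’s actual types, and the example keeps everything in memory rather than writing to a file.

```go
package main

import (
	"bytes"
	"encoding/gob"
	"fmt"
)

// Message is a hypothetical shape for one turn in a chat.
type Message struct {
	Role      string // e.g. "user" or "model"
	Text      string
	AssetPath string // path to an image file on disk, if the turn has one
}

// encodeHistory serialises a chat history into Gob bytes.
func encodeHistory(history []Message) ([]byte, error) {
	var buf bytes.Buffer
	if err := gob.NewEncoder(&buf).Encode(history); err != nil {
		return nil, err
	}
	return buf.Bytes(), nil
}

// decodeHistory reads a chat history back from Gob bytes.
func decodeHistory(data []byte) ([]Message, error) {
	var history []Message
	if err := gob.NewDecoder(bytes.NewReader(data)).Decode(&history); err != nil {
		return nil, err
	}
	return history, nil
}

func main() {
	history := []Message{
		{Role: "user", Text: "Draw a banana wearing sunglasses"},
		{Role: "model", Text: "Here you go", AssetPath: "assets/banana.png"},
	}
	data, err := encodeHistory(history)
	if err != nil {
		panic(err)
	}
	restored, err := decodeHistory(data)
	if err != nil {
		panic(err)
	}
	fmt.Printf("restored %d messages from %d bytes\n", len(restored), len(data))
}
```

Swapping the `bytes.Buffer` for an `*os.File` would give the on-disk version, since the encoder and decoder only need an `io.Writer` and `io.Reader`.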

The chat interface is a good example. While a more traditional frontend would post a message as JSON and rerender the page from a JSON response, posting a chat message here returns a server-side HTML render of the chat interface, which HTMX swaps in. This also includes things like the message form itself, which is rendered disabled by the server template rather than being controlled by JavaScript. The good thing about that is that if you were to hard-refresh the page, the form would still appear disabled. No need to run JavaScript on load to detect the state and disable the form: the HTML is the state. As for getting updates while waiting for a message, well that’s just a poll. Nothing too sophisticated here.
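Here is a minimal sketch of the “HTML is the state” idea using only Go’s `html/template`. The `ChatView` type, the `/chats/...` URLs, and the two-second poll interval are assumptions for illustration, not Imagen’s real routes or markup. While a response is pending, the template disables the form and adds HTMX polling attributes; once the response is in, the same template renders without them.

```go
package main

import (
	"bytes"
	"fmt"
	"html/template"
)

// ChatView is a hypothetical view model for one chat.
type ChatView struct {
	ChatID   string
	Messages []string
	Pending  bool // true while waiting on the model
}

// chatTmpl renders the whole chat fragment. When Pending is set, the wrapper
// polls the server (hx-trigger="every 2s") and the form controls are disabled.
var chatTmpl = template.Must(template.New("chat").Parse(`<div id="chat" {{if .Pending}}hx-get="/chats/{{.ChatID}}" hx-trigger="every 2s" hx-swap="outerHTML"{{end}}>
{{range .Messages}}<p>{{.}}</p>
{{end}}<form hx-post="/chats/{{.ChatID}}/messages" hx-target="#chat" hx-swap="outerHTML">
<input name="prompt" {{if .Pending}}disabled{{end}}>
<button type="submit" {{if .Pending}}disabled{{end}}>Send</button>
</form>
</div>`))

// renderChat returns the HTML fragment a handler would send back to HTMX.
func renderChat(v ChatView) (string, error) {
	var buf bytes.Buffer
	if err := chatTmpl.Execute(&buf, v); err != nil {
		return "", err
	}
	return buf.String(), nil
}

func main() {
	html, err := renderChat(ChatView{
		ChatID:   "42",
		Messages: []string{"Draw a banana wearing sunglasses"},
		Pending:  true,
	})
	if err != nil {
		panic(err)
	}
	fmt.Println(html)
}
```

Because the fragment replaces itself with `outerHTML`, the first response rendered with `Pending` set to false no longer carries the polling attributes, so the poll stops without any client-side code.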

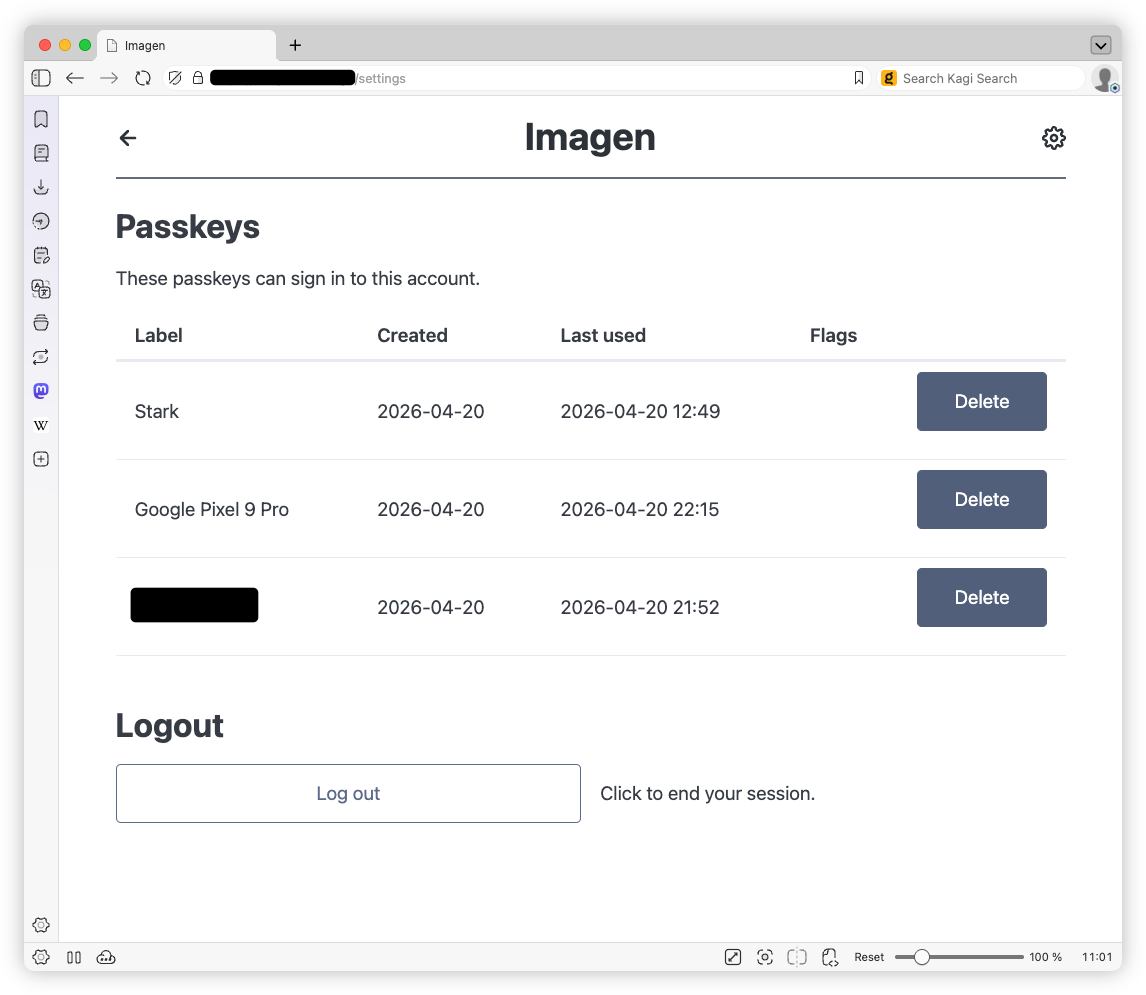

As you can probably guess, much of this was hand-rolled. I did use coding agents to assist with making the passkey authentication flow, the meta commands, and the OpenAI API integration. While I probably could’ve done the last two myself, if there was any justification for using coding agents for anything, it was adding passkeys: the complexity involved with that integration made it worthwhile. And this is probably one area of AI-generated code where I’m more likely to trust the model over my own shoddy code.